What do we do?

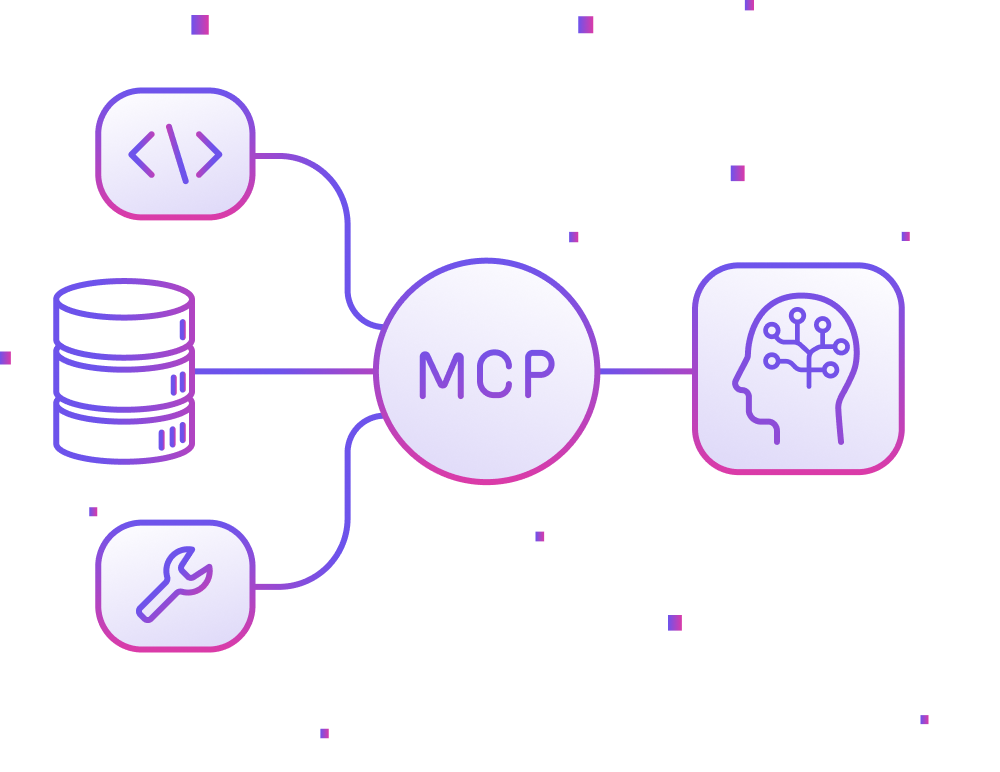

Connecting Intelligence to Action

Our MCP server development services connect AI agents directly to the tools your business depends on, not in demos but in live enterprise production environments.

Live Integrations, Across Your Stack

We’ve already deployed MCP integrations in real enterprise environments across Google Workspace, Microsoft 365, Slack, Cisco, QuickBooks, Salesforce, and Jira. We don’t prototype MCP. We deliver it in production.

Claude, GPT-4o, Gemini, All Three Unified

We build MCP servers compatible with all major AI platforms: Claude natively, GPT-4o via a function-calling adapter, and Gemini 2.5 via Google's official Gemini SDKs and Vertex AI. One integration layer. Any model.

Design-Led AI Engineering

Terralogic pairs AI enterprise integration services with UX design from Lollypop Design Studio. We build agents whose experience is as intuitive as the infrastructure powering them is robust. Expert backend meets human-centric design.

One MCP Integration Layer for All Major AI Platforms

Claude, GPT-4o, and Gemini power today’s enterprise AI landscape. We build MCP servers that work seamlessly across all of them, giving you a single custom MCP server that supports whichever model your architecture requires.

- Claude Sonnet 4.6 · Opus 4.6: Native
- GPT-4o · GPT-4 Turbo: Adapter
- Gemini 2.5 Pro · Flash: Official SDK
- Llama 3 70B · 8B: Self-hosted

What we build

What Each MCP Integration Enables Your AI to Do

These aren't concepts or demos. These are the production integrations we have built and deployed, giving your AI structured, authenticated access to live enterprise systems and the ability to act.

Google Workspace MCP

Our MCP layer allows agents to read Gmail threads, find the right Drive document, check Calendar availability, run a BigQuery query, and draft a Docs response, all in a single reasoning chain. Works with Gemini (native integration), Claude, and GPT-4o.

Microsoft 365 MCP

Built for Microsoft-centric enterprises, this integration gives AI agents secure access to Outlook, Teams, SharePoint, and OneDrive, using Microsoft Entra ID (formerly Azure AD) authentication and role-based access controls.

Slack & Cisco MCP

Scale your operations with intelligent NOC & IT automation: agents monitor Slack channels, trigger automated workflows, and post updates. From Cisco networking incidents to infrastructure alerts, they make sure critical issues are escalated to the right people.

QuickBooks MCP

Our finance agents work with live QuickBooks data (invoices, expenses, payroll, and vendor records), cross-reference purchase orders, flag anomalies, and route exceptions to human reviewers, with compliance-grade audit logs throughout.

Salesforce & CRM MCP

With access to live Salesforce accounts, leads, opportunities, and activity history, governed by field-level write controls, AI agents can update records and log interactions without ever touching sensitive, off-limits data.

Jira & Confluence MCP

These AI agents bridge the gap between reasoning and action: they assess incoming Jira tickets, update sprint boards, search Confluence for documentation, and summarize project status in real time.

Discovery to Production in 5 Weeks

Every custom MCP server build starts with clarity: your tools, systems, access patterns, and security requirements. No guessing. No rework after handover. A system built to run in your environment from day one.

Week 01

Systems & Requirements Discovery

Map your tools, APIs, and data sources. Define access scope and auth methods for each. Select the right LLM: Claude, GPT-4o, or Gemini. Deliver the architecture spec and build estimate.

Weeks 02–03

MCP Server Build

Typed tool definitions and resource schemas. Authentication layer per integration (OAuth2, service account, JWT). Error handling, rate limiting, and audit logging from day one.
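As a rough illustration of what typed tool definitions and error handling look like at the protocol level, here is a minimal, transport-free Python sketch of an MCP server answering the `tools/list` and `tools/call` JSON-RPC 2.0 methods. The registry, the `get_ticket_status` tool, and the `handle` function are hypothetical; a production build would normally use an official MCP SDK, full JSON Schema validation, and a real stdio or HTTP transport.

```python
import json
from typing import Any, Callable

Handler = Callable[[dict[str, Any]], str]

# Hypothetical in-memory registry: tool name -> (MCP-style definition, handler).
TOOLS: dict[str, tuple[dict[str, Any], Handler]] = {}


def register_tool(name: str, description: str, input_schema: dict[str, Any]) -> Callable[[Handler], Handler]:
    """Record a tool's definition (name, description, inputSchema) next to its handler."""
    def wrap(fn: Handler) -> Handler:
        TOOLS[name] = ({"name": name, "description": description, "inputSchema": input_schema}, fn)
        return fn
    return wrap


@register_tool(
    "get_ticket_status",  # hypothetical example tool
    "Return the workflow status of a ticket by its key.",
    {"type": "object", "properties": {"key": {"type": "string"}}, "required": ["key"]},
)
def get_ticket_status(args: dict[str, Any]) -> str:
    # Placeholder: a real server would call the ticketing system's API here.
    return f"{args['key']}: In Progress"


def handle(raw: str) -> str:
    """Answer one JSON-RPC 2.0 request for tools/list or tools/call."""
    req = json.loads(raw)
    req_id = req.get("id")
    method = req.get("method")
    params = req.get("params") or {}

    def error(code: int, message: str) -> str:
        return json.dumps({"jsonrpc": "2.0", "id": req_id,
                           "error": {"code": code, "message": message}})

    if method == "tools/list":
        result: dict[str, Any] = {"tools": [definition for definition, _ in TOOLS.values()]}
    elif method == "tools/call":
        entry = TOOLS.get(params.get("name"))
        if entry is None:
            return error(-32602, f"Unknown tool: {params.get('name')}")
        definition, fn = entry
        args = params.get("arguments") or {}
        # Only required keys are checked here; full JSON Schema validation is omitted.
        missing = [k for k in definition["inputSchema"].get("required", []) if k not in args]
        if missing:
            return error(-32602, f"Missing arguments: {', '.join(missing)}")
        try:
            result = {"content": [{"type": "text", "text": fn(args)}], "isError": False}
        except Exception as exc:
            # Tool failures go back to the model as an error result instead of crashing the server.
            result = {"content": [{"type": "text", "text": str(exc)}], "isError": True}
    else:
        return error(-32601, f"Method not found: {method}")
    return json.dumps({"jsonrpc": "2.0", "id": req_id, "result": result})
```

Keeping tool failures inside the result (`isError: true`) rather than as protocol errors lets the model see what went wrong and decide whether to retry, while malformed requests still get standard JSON-RPC error codes.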

Week 04

Testing & Security Review

Validate against your target LLM. Test tool call accuracy, context handling, and edge cases. OAuth2 token refresh testing. Security review of all access controls and data exposure paths.

Week 05

Deploy & Handover

Production deployment to your infrastructure. Complete documentation, runbooks, and team handover. Retainer available for new integrations and model updates.

We have worked across a wide range of industries

From startups to well-known brands, we have many stories to tell

- Real Estate
- Accounting
- Fintech
- Healthcare
- Retail
- Insurance
- Automotive
- Government
- Edutech
- Manufacturing
- Small and Medium Business
- E-Commerce

Frequently Asked Questions

Does Google Gemini support MCP?

Yes. Google officially supports MCP via the Gemini Python and JavaScript SDKs, the Gemini CLI, and Google Cloud-managed remote MCP servers for BigQuery, Google Maps, Compute Engine, and Kubernetes Engine. Terralogic builds MCP servers for Gemini 2.5 Pro and Gemini 2.5 Flash on Vertex AI using Google OAuth2 and service account authentication. The same MCP server layer can serve Claude, GPT-4o, and Gemini—the model is a configuration choice, not an architectural constraint.

What enterprise systems has Terralogic already integrated via MCP?

Terralogic has delivered live MCP integrations, currently running in enterprise production, that connect AI agents to Google Workspace (Gmail, Drive, Calendar, BigQuery, and Docs); Microsoft 365 (Outlook, Teams, SharePoint, and OneDrive); Slack; Cisco (Webex and networking infrastructure APIs); QuickBooks (accounting, invoicing, expenses, and payroll); Salesforce (CRM and leads); Jira and Confluence (tickets, sprints, and documentation); and custom internal enterprise REST and GraphQL APIs. Each integration includes OAuth2 or service account authentication, rate limiting, and full audit logging.

Can the same MCP server work with Claude, GPT-4o, and Gemini?

Yes. MCP is a model-agnostic protocol. Claude supports it natively. GPT-4o and Gemini connect via adapter layers that translate MCP tool definitions to their respective function-calling formats. Terralogic builds MCP servers that are compatible across all three platforms, so the same backend integration can serve different AI models depending on the use case or team preference.
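A minimal sketch of what such an adapter layer might do, assuming tools are described in MCP's `name` / `description` / `inputSchema` shape. The OpenAI side targets the Chat Completions `tools` format; the Gemini side emits a plain function declaration and strips a small, assumed set of JSON Schema keywords. Which keywords Gemini actually rejects depends on the SDK and API version, so treat that set as a placeholder. All function names here are hypothetical.

```python
import json
from typing import Any

# Keywords this sketch ASSUMES the Gemini schema dialect may reject; verify against your SDK version.
# Note: this naive pass would also drop a property literally named "additionalProperties".
GEMINI_UNSUPPORTED_KEYS = {"$schema", "additionalProperties"}


def mcp_to_openai(tool: dict[str, Any]) -> dict[str, Any]:
    """MCP tool definition -> OpenAI Chat Completions `tools` entry."""
    return {
        "type": "function",
        "function": {
            "name": tool["name"],
            "description": tool.get("description", ""),
            "parameters": tool["inputSchema"],
        },
    }


def _strip_keys(schema: Any) -> Any:
    """Recursively drop schema keywords listed in GEMINI_UNSUPPORTED_KEYS."""
    if isinstance(schema, dict):
        return {k: _strip_keys(v) for k, v in schema.items() if k not in GEMINI_UNSUPPORTED_KEYS}
    if isinstance(schema, list):
        return [_strip_keys(v) for v in schema]
    return schema


def mcp_to_gemini(tool: dict[str, Any]) -> dict[str, Any]:
    """MCP tool definition -> Gemini function declaration (name, description, parameters)."""
    return {
        "name": tool["name"],
        "description": tool.get("description", ""),
        "parameters": _strip_keys(tool["inputSchema"]),
    }


def openai_call_to_mcp(tool_call: dict[str, Any]) -> dict[str, Any]:
    """OpenAI tool call -> MCP tools/call params. OpenAI sends arguments as a JSON string."""
    fn = tool_call["function"]
    return {"name": fn["name"], "arguments": json.loads(fn["arguments"] or "{}")}
```

The point of the design is that the MCP definition stays the single source of truth: each model gets a thin, mechanical translation, and tool calls coming back are normalized into the same `tools/call` shape the server already handles.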

How is Terralogic different from other MCP development companies?

Terralogic has delivered live MCP integrations to Google Workspace, Microsoft 365, Slack, Cisco, QuickBooks, Salesforce, and Jira that are running in production today—not reference implementations or demos. Terralogic also pairs AI engineering with UX design from Lollypop Design Studio, delivering the complete AI experience from server infrastructure through to the human interface. Most MCP developers provide backend plumbing alone. Terralogic ships the full system.

How long does MCP server development take?

Terralogic’s MCP Discovery Sprint takes 1 week and delivers a complete architecture specification, model recommendation (Claude, GPT-4o, or Gemini), integration map, and build estimate. The build and deploy phase takes 4–10 weeks, depending on the number of systems being connected and the authentication complexity. The total timeline from first conversation to production is typically 5–12 weeks.

What makes Anthropic a strong choice for MCP?

Anthropic offers native MCP support with first-class tool definitions, structured resource access, and prompt templates built directly into the Claude ecosystem. This results in highly predictable and reliable tool-calling, especially in complex, multi-step reasoning workflows and long-document processing. It is particularly strong where determinism, safety, and structured planning are critical. (Cloud-only deployment, supports OAuth2, API key, and JWT.)

When should you choose OpenAI for MCP workflows?

OpenAI is ideal when you need robust multimodal capabilities (text, image, audio), strong developer tooling, and deep Microsoft ecosystem integration. MCP is implemented via an adapter layer, translating MCP tools into function-calling, which works reliably in production. It’s a strong choice for teams already invested in Azure, Microsoft 365, or existing OpenAI-based stacks and for use cases involving code generation, assistants, and cross-modal workflows. (Supports OAuth2 and API key, available via Azure OpenAI.)

Why use Google for MCP-based systems?

Google provides official MCP support through Gemini SDKs (Python/JS) and CLI, along with managed MCP servers for services like BigQuery, Maps, GKE, and Compute Engine. This makes it especially powerful for data-intensive workflows, analytics agents, OCR/document processing, and Google Workspace-native use cases. Its tight integration with Vertex AI and Google Cloud services enables scalable, production-grade deployments with strong identity and access controls. (Supports OAuth2, service accounts, and IAP.)

When is Meta the right choice for MCP deployment?

Meta is the right choice when data privacy, infrastructure control, and cost efficiency at scale are key priorities. MCP is implemented via an adapter on self-hosted inference stacks such as vLLM, Ollama, or TGI, allowing full control over model deployment. This makes it ideal for regulated industries, air-gapped environments, or high-volume workloads where data cannot leave internal systems. (Supports internal authentication and mTLS, with full on-premise deployment.)

What exactly is Terralogic delivering — a prototype or a production-ready system?

Terralogic delivers a fully production-ready MCP system, containerized and deployed in your infrastructure—compatible with Claude, GPT-4o, and Gemini. This is not a proof of concept but a system your team can operate and scale.

How are MCP tools defined to ensure reliability across models?

We don’t believe in “one-off” fixes. We use strictly typed, versioned tool definitions with rock-solid input/output schemas, so whether you’re running GPT-4o, Claude, or a local Llama model, the tools behave the same way and you don’t have to re-engineer them every time you switch models.
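One way to picture “strictly typed, versioned definitions” is sketched below: a hypothetical `ToolSpec` that carries a version string, renders one JSON Schema for every model, and validates both inputs and outputs. The hand-rolled checker covers only flat fields and basic JSON types; it is an illustrative stand-in for a full JSON Schema validator, and the `lookup_invoice` tool is invented for the example.

```python
from dataclasses import dataclass, field
from typing import Any

# JSON Schema type name -> Python type(s) accepted for it.
_PY_TYPES: dict[str, Any] = {
    "string": str, "integer": int, "number": (int, float),
    "boolean": bool, "object": dict, "array": list,
}


def _check(fields: dict[str, str], required: tuple[str, ...], payload: dict[str, Any]) -> list[str]:
    """Return a list of schema violations (an empty list means valid)."""
    errors = [f"missing field: {k}" for k in required if k not in payload]
    for key, value in payload.items():
        expected = fields.get(key)
        if expected is None:
            errors.append(f"unexpected field: {key}")
        # bool is a subclass of int in Python, so reject it explicitly for numeric fields.
        elif (isinstance(value, bool) and expected in ("integer", "number")) or not isinstance(value, _PY_TYPES[expected]):
            errors.append(f"{key}: expected {expected}")
    return errors


@dataclass(frozen=True)
class ToolSpec:
    name: str
    version: str  # bump on any breaking change to either schema
    description: str
    input_fields: dict[str, str]
    required: tuple[str, ...] = ()
    output_fields: dict[str, str] = field(default_factory=dict)

    def input_schema(self) -> dict[str, Any]:
        """Render the input side as the JSON Schema exposed to every model."""
        return {
            "type": "object",
            "properties": {k: {"type": t} for k, t in self.input_fields.items()},
            "required": list(self.required),
            "additionalProperties": False,
        }

    def validate_input(self, payload: dict[str, Any]) -> list[str]:
        return _check(self.input_fields, self.required, payload)

    def validate_output(self, payload: dict[str, Any]) -> list[str]:
        # Every declared output field is treated as required.
        return _check(self.output_fields, tuple(self.output_fields), payload)


# Hypothetical tool; specs are keyed by (name, version) so old and new versions can coexist.
LOOKUP_INVOICE_V1 = ToolSpec(
    name="lookup_invoice",
    version="1.0.0",
    description="Fetch an invoice by ID.",
    input_fields={"invoice_id": "string", "include_lines": "boolean"},
    required=("invoice_id",),
    output_fields={"invoice_id": "string", "amount": "number", "status": "string"},
)
REGISTRY = {(LOOKUP_INVOICE_V1.name, LOOKUP_INVOICE_V1.version): LOOKUP_INVOICE_V1}
```

Validating outputs as well as inputs is what keeps behavior identical across models: whichever model made the call, it always receives data in the same declared shape.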

How is authentication handled across different integrations?

Security shouldn’t slow you down. We’ve built a flexible auth layer that manages the “messy” parts—OAuth2, JWTs, and service accounts—automatically. We handle token refreshes and per-user credential isolation out of the box, so your data stays locked down but accessible.
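The token-refresh and per-user isolation behavior can be sketched as a small cache keyed by (user, integration) that refreshes a token shortly before it expires. This is a minimal sketch under stated assumptions: the `refresh` callback is hypothetical and stands in for an OAuth2 refresh-token request to each integration's token endpoint, and the 60-second skew is an arbitrary default.

```python
import threading
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class Token:
    access_token: str
    refresh_token: str
    expires_at: float  # seconds since the epoch


# Hypothetical refresh hook: (integration, refresh_token) -> new Token.
# In practice this would perform the OAuth2 refresh_token grant for that integration.
RefreshFn = Callable[[str, str], Token]


class TokenStore:
    """Caches tokens per (user, integration) and refreshes them shortly before they expire."""

    def __init__(self, refresh: RefreshFn, skew_seconds: float = 60.0,
                 clock: Callable[[], float] = time.time) -> None:
        self._refresh = refresh
        self._skew = skew_seconds
        self._clock = clock
        self._tokens: dict[tuple[str, str], Token] = {}
        # A single lock keeps the sketch simple; a production store would lock per key
        # so one slow refresh does not block every other user.
        self._lock = threading.Lock()

    def put(self, user_id: str, integration: str, token: Token) -> None:
        with self._lock:
            self._tokens[(user_id, integration)] = token

    def access_token(self, user_id: str, integration: str) -> str:
        key = (user_id, integration)  # credentials are never shared across users
        with self._lock:
            token = self._tokens.get(key)
            if token is None:
                raise KeyError(f"no credentials for {user_id} on {integration}")
            if token.expires_at - self._skew <= self._clock():
                token = self._refresh(integration, token.refresh_token)
                self._tokens[key] = token
            return token.access_token
```

Refreshing slightly early (the skew window) avoids handing a tool a token that expires mid-request, and keying by user means one user's agent can never act with another user's credentials.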

How does Terralogic ensure security, compliance, and auditability?

We bake compliance directly into the MCP layer. Every interaction is timestamped and logged, including inputs, outputs, and user identities, giving you a bulletproof audit trail. Add in our built-in rate limiting, and you’ve got a system that’s as secure as it is scalable.
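Here is a minimal sketch of how rate limiting and audit logging could wrap every tool call, assuming a token-bucket limiter and one JSON record per call. `TokenBucket`, `audited_call`, and the `sink` callback are hypothetical names, not part of any MCP library.

```python
import json
import time
from datetime import datetime, timezone
from typing import Any, Callable


class TokenBucket:
    """Allows bursts up to `capacity` calls, refilling at `rate_per_sec` tokens per second."""

    def __init__(self, rate_per_sec: float, capacity: int,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate_per_sec
        self.capacity = capacity
        self.tokens = float(capacity)
        self.clock = clock
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


def audited_call(user_id: str, tool: str, args: dict[str, Any],
                 fn: Callable[[dict[str, Any]], Any], bucket: TokenBucket,
                 sink: Callable[[str], None]) -> Any:
    """Run one tool call behind a rate limit and emit exactly one JSON audit record."""
    record: dict[str, Any] = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "user": user_id,
        "tool": tool,
        "input": args,
    }
    if not bucket.allow():
        record["outcome"] = "rate_limited"
        sink(json.dumps(record, default=str))
        raise RuntimeError(f"rate limit exceeded for {user_id}")
    try:
        result = fn(args)
        record.update(outcome="ok", output=result)
        return result
    except Exception as exc:
        record.update(outcome="error", error=str(exc))
        raise
    finally:
        sink(json.dumps(record, default=str))
```

In a deployment you would likely keep one bucket per user or per integration and send records to an append-only store; the `sink` callable stands in for that. Logging rejected and failed calls, not just successful ones, is what makes the trail useful for audits.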

How does Terralogic ensure the system is reliable before deployment?

We don’t guess; we validate. Before deployment, we run a heavy-duty integration suite that stress-tests everything from tool accuracy to timeout handling and edge cases. We test it against your specific LLM environment to ensure that when you go live, the system actually holds up under pressure.

What kind of documentation and handover does Terralogic provide?

Terralogic provides complete, transparent documentation — including architecture, tool references, authentication setup, deployment runbooks, and maintenance guides — ensuring your team can fully own and extend the system.

Let's Craft Brilliance

Just exploring? Let's think out loud together. We would love to hear from you. Come, let's get started!